Commit 332d34b

1 Parent(s): 40832f4

Upload folder using huggingface_hub

- .gitignore +3 -0

- LICENSE +84 -0

- README.md +170 -3

- README_en.md +167 -0

- config.json +45 -0

- configuration.json +1 -0

- configuration_chatglm.py +58 -0

- generation_config.json +13 -0

- model-00001-of-00010.safetensors +3 -0

- model-00002-of-00010.safetensors +3 -0

- model-00003-of-00010.safetensors +3 -0

- model-00004-of-00010.safetensors +3 -0

- model-00005-of-00010.safetensors +3 -0

- model-00006-of-00010.safetensors +3 -0

- model-00007-of-00010.safetensors +3 -0

- model-00008-of-00010.safetensors +3 -0

- model-00009-of-00010.safetensors +3 -0

- model-00010-of-00010.safetensors +3 -0

- model.safetensors.index.json +291 -0

- modeling_chatglm.py +1138 -0

- tokenization_chatglm.py +322 -0

- tokenizer.model +3 -0

- tokenizer_config.json +134 -0

.gitignore

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

*venv

|

| 2 |

+

*.DS_Store

|

| 3 |

+

*.idea/

|
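The three patterns added to `.gitignore` can be illustrated with a small sketch. It approximates gitignore matching with Python's `fnmatchcase` on a single path component, which is an assumption for illustration: real gitignore rules have more cases (negation, anchored paths, `**`). The helper name `is_ignored` is hypothetical.

```python
from fnmatch import fnmatchcase

# Patterns exactly as added in this commit's .gitignore.
PATTERNS = ["*venv", "*.DS_Store", "*.idea/"]


def is_ignored(name: str, is_dir: bool) -> bool:
    """Approximate check of one path component against PATTERNS.

    A trailing slash in a pattern restricts it to directories,
    mirroring the gitignore convention.
    """
    for pattern in PATTERNS:
        dir_only = pattern.endswith("/")
        if dir_only and not is_dir:
            continue
        if fnmatchcase(name, pattern.rstrip("/")):
            return True
    return False


print(is_ignored(".venv", is_dir=True))        # True
print(is_ignored(".DS_Store", is_dir=False))   # True
print(is_ignored(".idea", is_dir=True))        # True
print(is_ignored("README.md", is_dir=False))   # False
```

Because `*` also matches the empty string, `*venv` covers `venv`, `.venv`, and any other name ending in `venv`.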

LICENSE

ADDED

|

@@ -0,0 +1,84 @@


| 1 |

+

The glm-4-9b License

|

| 2 |

+

|

| 3 |

+

1. 定义

|

| 4 |

+

|

| 5 |

+

“许可方”是指分发其软件的 glm-4-9b 模型团队。

|

| 6 |

+

“软件”是指根据本许可提供的 glm-4-9b 模型参数。

|

| 7 |

+

|

| 8 |

+

2. 许可授予

|

| 9 |

+

|

| 10 |

+

根据本许可的条款和条件,许可方特此授予您非排他性、全球性、不可转让、不可再许可、可撤销、免版税的版权许可。

|

| 11 |

+

本许可允许您免费使用本仓库中的所有开源模型进行学术研究,对于希望将模型用于商业目的的用户,需在[这里](https://open.bigmodel.cn/mla/form)完成登记。经过登记的用户可以免费使用本模型进行商业活动,但必须遵守本许可的所有条款和条件。

|

| 12 |

+

上述版权声明和本许可声明应包含在本软件的所有副本或重要部分中。

|

| 13 |

+

如果您分发或提供 THUDM / 智谱AI 关于 glm-4 开源模型的材料(或其任何衍生作品),或使用其中任何材料(包括 glm-4 系列的所有开源模型)的产品或服务,您应:

|

| 14 |

+

|

| 15 |

+

(A) 随任何此类 THUDM / 智谱AI 材料提供本协议的副本;

|

| 16 |

+

(B) 在相关网站、用户界面、博客文章、关于页面或产品文档上突出显示 “Built with glm-4”。

|

| 17 |

+

如果您使用 THUDM / 智谱AI的 glm-4 开源模型的材料来创建、训练、微调或以其他方式改进已分发或可用的 AI 模型,您还应在任何此类 AI 模型名称的开头添加 “glm-4”。

|

| 18 |

+

|

| 19 |

+

3. 限制

|

| 20 |

+

|

| 21 |

+

您不得出于任何军事或非法目的使用、复制、修改、合并、发布、分发、复制或创建本软件的全部或部分衍生作品。

|

| 22 |

+

您不得利用本软件从事任何危害国家安全和国家统一,危害社会公共利益及公序良俗,侵犯他人商业秘密、知识产权、名誉权、肖像权、财产权等权益的行为。

|

| 23 |

+

您在使用中应遵循使用地所适用的法律法规政策、道德规范等要求。

|

| 24 |

+

|

| 25 |

+

4. 免责声明

|

| 26 |

+

|

| 27 |

+

本软件“按原样”提供,不提供任何明示或暗示的保证,包括但不限于对适销性、特定用途的适用性和非侵权性的保证。

|

| 28 |

+

在任何情况下,作者或版权持有人均不对任何索赔、损害或其他责任负责,无论是在合同诉讼、侵权行为还是其他方面,由软件或软件的使用或其他交易引起、由软件引起或与之相关

|

| 29 |

+

软件。

|

| 30 |

+

|

| 31 |

+

5. 责任限制

|

| 32 |

+

|

| 33 |

+

除适用法律禁止的范围外,在任何情况下且根据任何法律理论,无论是基于侵权行为、疏忽、合同、责任或其他原因,任何许可方均不对您承担任何直接、间接、特殊、偶然、示范性、

|

| 34 |

+

或间接损害,或任何其他商业损失,即使许可人已被告知此类损害的可能性。

|

| 35 |

+

|

| 36 |

+

6. 争议解决

|

| 37 |

+

|

| 38 |

+

本许可受中华人民共和国法律管辖并按其解释。 因本许可引起的或与本许可有关的任何争议应提交北京市海淀区人民法院。

|

| 39 |

+

请注意,许可证可能会更新到更全面的版本。 有关许可和版权的任何问题,请通过 [email protected] 与我们联系。

|

| 40 |

+

|

| 41 |

+

1. Definitions

|

| 42 |

+

|

| 43 |

+

“Licensor” means the glm-4-9b Model Team that distributes its Software.

|

| 44 |

+

“Software” means the glm-4-9b model parameters made available under this license.

|

| 45 |

+

|

| 46 |

+

2. License

|

| 47 |

+

|

| 48 |

+

Under the terms and conditions of this license, the Licensor hereby grants you a non-exclusive, worldwide, non-transferable, non-sublicensable, revocable, royalty-free copyright license.

|

| 49 |

+

This license allows you to use all open source models in this repository for free for academic research. Users who wish to use the models for commercial purposes must complete registration [here](https://open.bigmodel.cn/mla/form).

|

| 50 |

+

Registered users are free to use this model for commercial activities, but must comply with all terms and conditions of this license.

|

| 51 |

+

The copyright notice and this license notice shall be included in all copies or substantial portions of the Software.

|

| 52 |

+

If you distribute or provide THUDM / Zhipu AI materials on the glm-4 open source model (or any derivative works thereof), or products or services that use any materials therein (including all open source models of the glm-4 series), you should:

|

| 53 |

+

|

| 54 |

+

(A) Provide a copy of this Agreement with any such THUDM/Zhipu AI Materials;

|

| 55 |

+

(B) Prominently display "Built with glm-4" on the relevant website, user interface, blog post, About page, or product documentation.

|

| 56 |

+

If you use materials from THUDM/Zhipu AI's glm-4 open source model to create, train, fine-tune, or otherwise improve an AI model that is distributed or made available, you should also add "glm-4" to the beginning of any such AI model name.

|

| 57 |

+

|

| 58 |

+

3. Restrictions

|

| 59 |

+

|

| 60 |

+

You are not allowed to use, copy, modify, merge, publish, distribute, copy or create all or part of the derivative works of this software for any military or illegal purposes.

|

| 61 |

+

You are not allowed to use this software to engage in any behavior that endangers national security and unity, endangers social public interests and public order, infringes on the rights and interests of others such as trade secrets, intellectual property rights, reputation rights, portrait rights, and property rights.

|

| 62 |

+

You should comply with the applicable laws, regulations, policies, ethical standards, and other requirements in the place of use during use.

|

| 63 |

+

|

| 64 |

+

4. Disclaimer

|

| 65 |

+

|

| 66 |

+

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE

|

| 67 |

+

WARRANTIES OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR

|

| 68 |

+

COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR

|

| 69 |

+

OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE SOFTWARE.

|

| 70 |

+

|

| 71 |

+

5. Limitation of Liability

|

| 72 |

+

|

| 73 |

+

EXCEPT TO THE EXTENT PROHIBITED BY APPLICABLE LAW, IN NO EVENT AND UNDER NO LEGAL THEORY, WHETHER BASED IN TORT,

|

| 74 |

+

NEGLIGENCE, CONTRACT, LIABILITY, OR OTHERWISE WILL ANY LICENSOR BE LIABLE TO YOU FOR ANY DIRECT, INDIRECT, SPECIAL,

|

| 75 |

+

INCIDENTAL, EXEMPLARY, OR CONSEQUENTIAL DAMAGES, OR ANY OTHER COMMERCIAL LOSSES, EVEN IF THE LICENSOR HAS BEEN ADVISED

|

| 76 |

+

OF THE POSSIBILITY OF SUCH DAMAGES.

|

| 77 |

+

|

| 78 |

+

6. Dispute Resolution

|

| 79 |

+

|

| 80 |

+

This license shall be governed and construed in accordance with the laws of the People’s Republic of China. Any dispute

|

| 81 |

+

arising from or in connection with this License shall be submitted to Haidian District People's Court in Beijing.

|

| 82 |

+

|

| 83 |

+

Note that the license is subject to update to a more comprehensive version. For any questions related to the license and

|

| 84 |

+

copyright, please contact us at [email protected].

|

README.md

CHANGED

|

@@ -1,3 +1,170 @@

|

|

| 1 |

-

---

|

| 2 |

-

license:

|

| 3 |

-

|


| 1 |

+

---

|

| 2 |

+

license: other

|

| 3 |

+

license_name: glm-4

|

| 4 |

+

license_link: https://huggingface.co/THUDM/glm-4-9b-chat/blob/main/LICENSE

|

| 5 |

+

language:

|

| 6 |

+

- zh

|

| 7 |

+

- en

|

| 8 |

+

tags:

|

| 9 |

+

- glm

|

| 10 |

+

- chatglm

|

| 11 |

+

- thudm

|

| 12 |

+

inference: false

|

| 13 |

+

---

|

| 14 |

+

|

| 15 |

+

# GLM-4-9B-Chat

|

| 16 |

+

|

| 17 |

+

Read this in [English](README_en.md).

|

| 18 |

+

|

| 19 |

+

**2024/08/12: The code in this repository has been updated and now requires `transformers>=4.44.0`. Please update your dependencies.**

|

| 20 |

+

|

| 21 |

+

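**2024/07/24: We released our latest technical write-up on long-context techniques. See [here](https://medium.com/@ChatGLM/glm-long-scaling-pre-trained-model-contexts-to-millions-caa3c48dea85) for our technical report on the long-context techniques used in training the open-source GLM-4-9B model.**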

|

| 22 |

+

|

| 23 |

+

## Model Introduction

|

| 24 |

+

GLM-4-9B is the open-source version of GLM-4, the latest generation of pre-trained models released by Zhipu AI.

|

| 25 |

+

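In evaluations on datasets covering semantics, mathematics, reasoning, code, and knowledge, both GLM-4-9B and its human-preference-aligned version GLM-4-9B-Chat show strong performance.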

|

| 26 |

+

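Beyond multi-turn conversation, GLM-4-9B-Chat also offers advanced features such as web browsing, code execution, custom tool calling (Function Call), and long-context reasoning (supporting up to 128K

|

| 27 |

+

context).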

|

| 28 |

+

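This generation of models adds multilingual support, covering 26 languages including Japanese, Korean, and German. We have also released a model that supports a 1M context length (about 2 million Chinese characters).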

|

| 29 |

+

|

| 30 |

+

## Evaluation Results

|

| 31 |

+

|

| 32 |

+

We evaluated the GLM-4-9B-Chat model on several classic tasks and obtained the following results:

|

| 33 |

+

|

| 34 |

+

| Model | AlignBench-v2 | MT-Bench | IFEval | MMLU | C-Eval | GSM8K | MATH | HumanEval | NCB |

|

| 35 |

+

|:--------------------|:-------------:|:--------:|:------:|:----:|:------:|:-----:|:----:|:---------:|:----:|

|

| 36 |

+

| Llama-3-8B-Instruct | 5.12 | 8.00 | 68.58 | 68.4 | 51.3 | 79.6 | 30.0 | 62.2 | 24.7 |

|

| 37 |

+

| ChatGLM3-6B | 3.97 | 5.50 | 28.1 | 66.4 | 69.0 | 72.3 | 25.7 | 58.5 | 11.3 |

|

| 38 |

+

| GLM-4-9B-Chat | 6.61 | 8.35 | 69.0 | 72.4 | 75.6 | 79.6 | 50.6 | 71.8 | 32.2 |

|

| 39 |

+

|

| 40 |

+

### Long Context

|

| 41 |

+

|

| 42 |

+

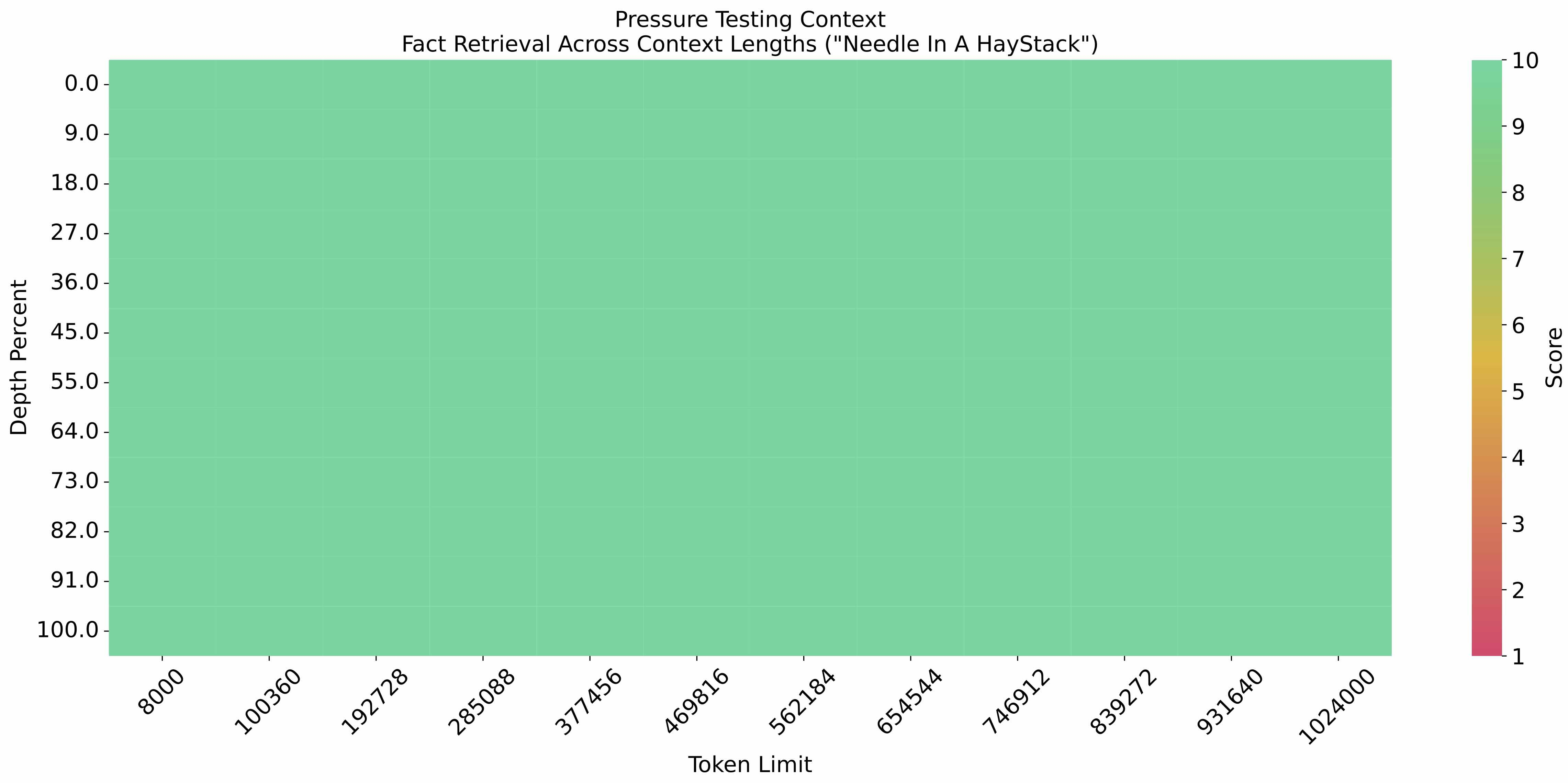

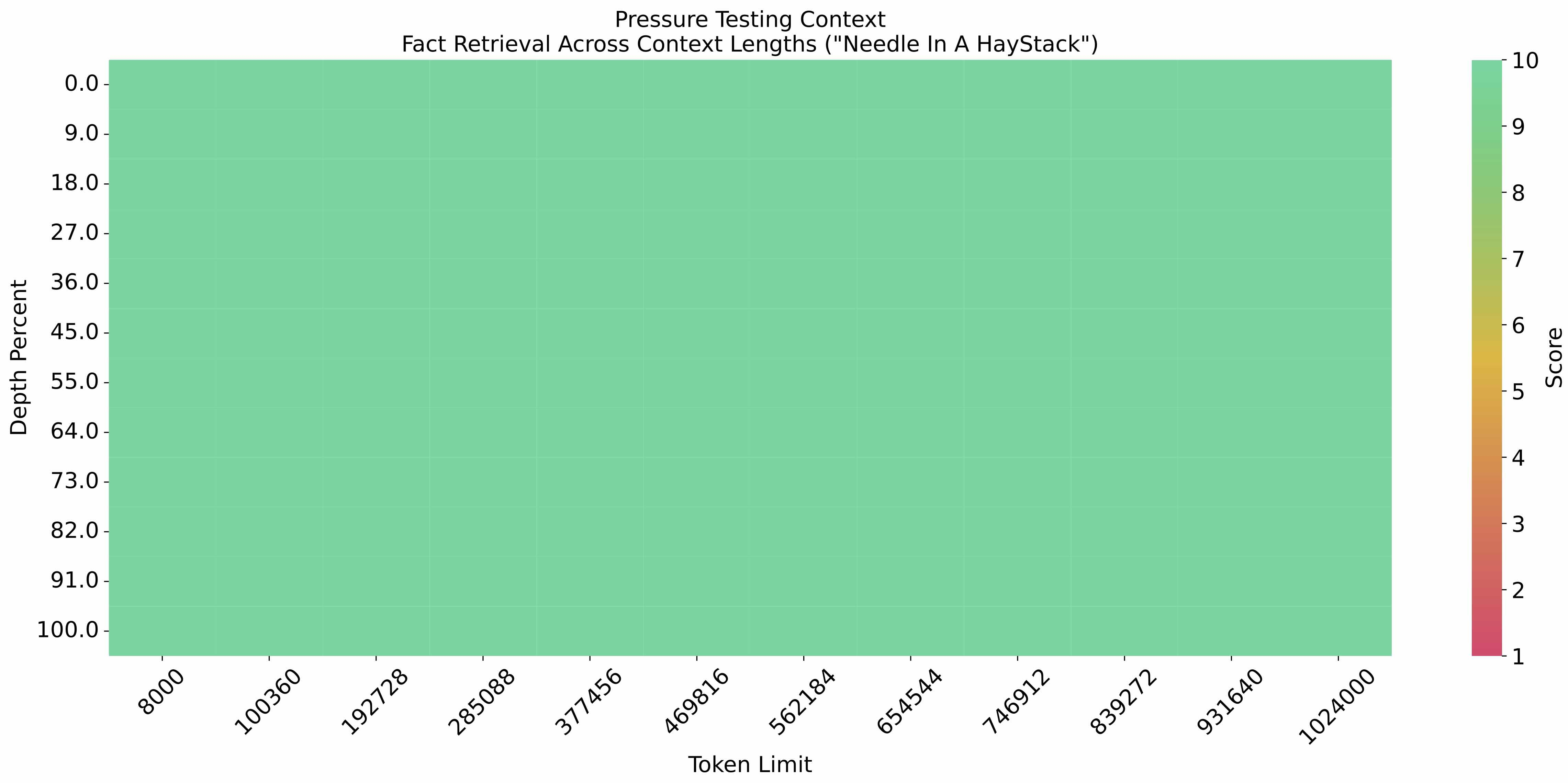

A [needle-in-a-haystack experiment](https://github.com/LargeWorldModel/LWM/blob/main/scripts/eval_needle.py) was run at a context length of 1M, with the following results:

|

| 43 |

+

|

| 44 |

+

|

| 45 |

+

|

| 46 |

+

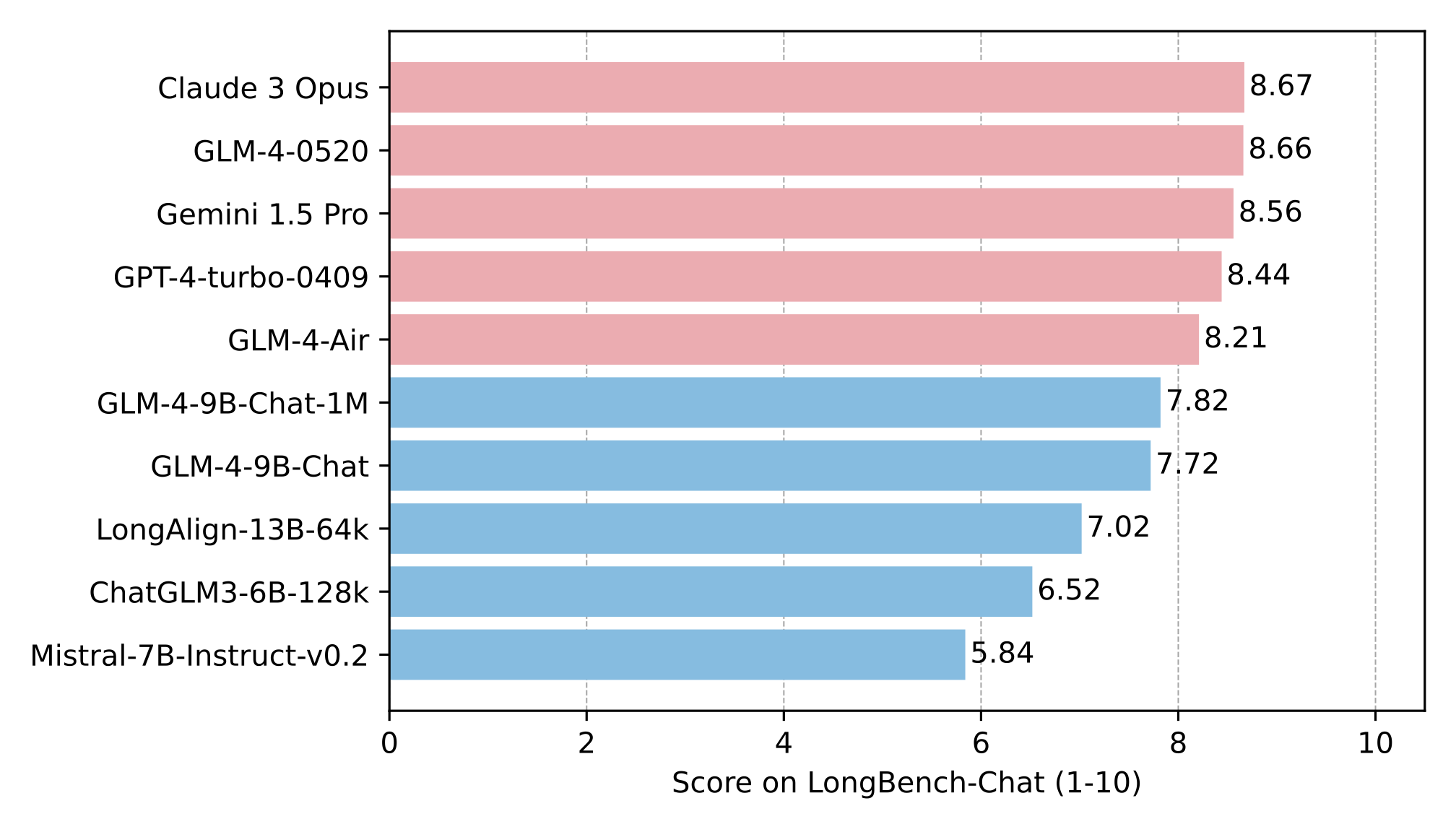

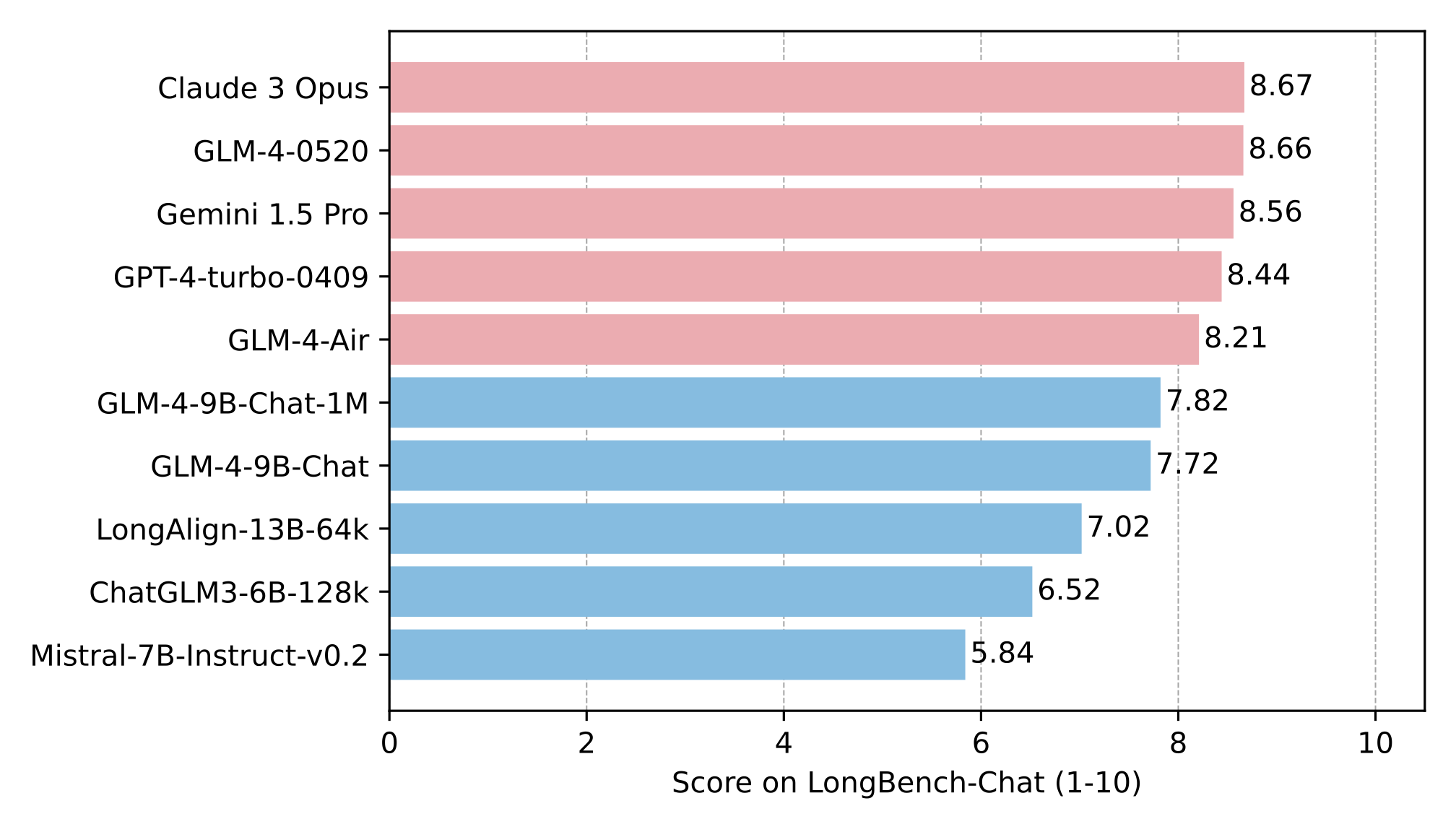

Long-context capability was further evaluated on LongBench-Chat, with the following results:

|

| 47 |

+

|

| 48 |

+

|

| 49 |

+

|

| 50 |

+

### Multilingual Capability

|

| 51 |

+

|

| 52 |

+

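GLM-4-9B-Chat and Llama-3-8B-Instruct were tested on six multilingual datasets. The results, along with the languages selected for each dataset, are shown in the table below: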

|

| 53 |

+

|

| 54 |

+

| Dataset | Llama-3-8B-Instruct | GLM-4-9B-Chat | Languages |

|

| 55 |

+

|:------------|:-------------------:|:-------------:|:----------------------------------------------------------------------------------------------:|

|

| 56 |

+

| M-MMLU | 49.6 | 56.6 | all |

|

| 57 |

+

| FLORES | 25.0 | 28.8 | ru, es, de, fr, it, pt, pl, ja, nl, ar, tr, cs, vi, fa, hu, el, ro, sv, uk, fi, ko, da, bg, no |

|

| 58 |

+

| MGSM | 54.0 | 65.3 | zh, en, bn, de, es, fr, ja, ru, sw, te, th |

|

| 59 |

+

| XWinograd | 61.7 | 73.1 | zh, en, fr, jp, ru, pt |

|

| 60 |

+

| XStoryCloze | 84.7 | 90.7 | zh, en, ar, es, eu, hi, id, my, ru, sw, te |

|

| 61 |

+

| XCOPA | 73.3 | 80.1 | zh, et, ht, id, it, qu, sw, ta, th, tr, vi |

|

| 62 |

+

|

| 63 |

+

### Tool Calling Capability

|

| 64 |

+

|

| 65 |

+

We tested on the [Berkeley Function Calling Leaderboard](https://github.com/ShishirPatil/gorilla/tree/main/berkeley-function-call-leaderboard)

|

| 66 |

+

and obtained the following results:

|

| 67 |

+

|

| 68 |

+

| Model | Overall Acc. | AST Summary | Exec Summary | Relevance |

|

| 69 |

+

|:-----------------------|:------------:|:-----------:|:------------:|:---------:|

|

| 70 |

+

| Llama-3-8B-Instruct | 58.88 | 59.25 | 70.01 | 45.83 |

|

| 71 |

+

| gpt-4-turbo-2024-04-09 | 81.24 | 82.14 | 78.61 | 88.75 |

|

| 72 |

+

| ChatGLM3-6B | 57.88 | 62.18 | 69.78 | 5.42 |

|

| 73 |

+

| GLM-4-9B-Chat | 81.00 | 80.26 | 84.40 | 87.92 |

|

| 74 |

+

|

| 75 |

+

**This repository is the model repository for GLM-4-9B-Chat, which supports a `128K` context length.**

|

| 76 |

+

|

| 77 |

+

## Running the Model

|

| 78 |

+

|

| 79 |

+

**For more inference code and dependency information, please visit our [github](https://github.com/THUDM/GLM-4).**

|

| 80 |

+

|

| 81 |

+

**Install the [dependencies](https://github.com/THUDM/GLM-4/blob/main/basic_demo/requirements.txt) exactly as listed; otherwise the model will not run properly.**

|

| 82 |

+

|

| 83 |

+

### Inference with the transformers backend:

|

| 84 |

+

|

| 85 |

+

|

| 86 |

+

```python

|

| 87 |

+

import torch

|

| 88 |

+

from transformers import AutoModelForCausalLM, AutoTokenizer

|

| 89 |

+

|

| 90 |

+

device = "cuda"

|

| 91 |

+

|

| 92 |

+

tokenizer = AutoTokenizer.from_pretrained("THUDM/glm-4-9b-chat", trust_remote_code=True)

|

| 93 |

+

|

| 94 |

+

query = "你好"

|

| 95 |

+

|

| 96 |

+

inputs = tokenizer.apply_chat_template([{"role": "user", "content": query}],

|

| 97 |

+

add_generation_prompt=True,

|

| 98 |

+

tokenize=True,

|

| 99 |

+

return_tensors="pt",

|

| 100 |

+

return_dict=True

|

| 101 |

+

)

|

| 102 |

+

|

| 103 |

+

inputs = inputs.to(device)

|

| 104 |

+

model = AutoModelForCausalLM.from_pretrained(

|

| 105 |

+

"THUDM/glm-4-9b-chat",

|

| 106 |

+

torch_dtype=torch.bfloat16,

|

| 107 |

+

low_cpu_mem_usage=True,

|

| 108 |

+

trust_remote_code=True

|

| 109 |

+

).to(device).eval()

|

| 110 |

+

|

| 111 |

+

gen_kwargs = {"max_length": 2500, "do_sample": True, "top_k": 1}

|

| 112 |

+

with torch.no_grad():

|

| 113 |

+

    outputs = model.generate(**inputs, **gen_kwargs)

|

| 114 |

+

    outputs = outputs[:, inputs['input_ids'].shape[1]:]

|

| 115 |

+

    print(tokenizer.decode(outputs[0], skip_special_tokens=True))

|

| 116 |

+

```

|
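The slice `outputs[:, inputs['input_ids'].shape[1]:]` in the snippet above relies on the fact that, for decoder-only models, `generate` returns the prompt tokens followed by the newly generated ones. A minimal sketch of the same step using plain Python lists instead of tensors, so it runs without a GPU or model download (the token IDs and the helper name `strip_prompt` are made up for illustration):

```python
def strip_prompt(sequences, prompt_len):
    """Drop the first `prompt_len` tokens of every sequence in the batch.

    Mirrors `outputs[:, prompt_len:]` on a 2-D tensor of token IDs.
    """
    return [seq[prompt_len:] for seq in sequences]


# Hypothetical batch of one: 4 prompt tokens followed by 3 generated tokens.
prompt_ids = [[11, 22, 33, 44]]
full_output = [[11, 22, 33, 44, 901, 902, 903]]

new_tokens = strip_prompt(full_output, prompt_len=len(prompt_ids[0]))
print(new_tokens)  # [[901, 902, 903]]
```

Decoding only the sliced tokens keeps the printed reply from echoing the user's prompt.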

| 117 |

+

|

| 118 |

+

Inference with the vLLM backend:

|

| 119 |

+

|

| 120 |

+

```python

|

| 121 |

+

from transformers import AutoTokenizer

|

| 122 |

+

from vllm import LLM, SamplingParams

|

| 123 |

+

|

| 124 |

+

# GLM-4-9B-Chat-1M

|

| 125 |

+

# max_model_len, tp_size = 1048576, 4

|

| 126 |

+

|

| 127 |

+

# GLM-4-9B-Chat

|

| 128 |

+

# If you run into OOM, consider reducing max_model_len or increasing tp_size

|

| 129 |

+

max_model_len, tp_size = 131072, 1

|

| 130 |

+

model_name = "THUDM/glm-4-9b-chat"

|

| 131 |

+

prompt = [{"role": "user", "content": "你好"}]

|

| 132 |

+

|

| 133 |

+

tokenizer = AutoTokenizer.from_pretrained(model_name, trust_remote_code=True)

|

| 134 |

+

llm = LLM(

|

| 135 |

+

model=model_name,

|

| 136 |

+

tensor_parallel_size=tp_size,

|

| 137 |

+

max_model_len=max_model_len,

|

| 138 |

+

trust_remote_code=True,

|

| 139 |

+

enforce_eager=True,

|

| 140 |

+

# GLM-4-9B-Chat-1M: if you run into OOM, consider enabling the following parameters

|

| 141 |

+

# enable_chunked_prefill=True,

|

| 142 |

+

# max_num_batched_tokens=8192

|

| 143 |

+

)

|

| 144 |

+

stop_token_ids = [151329, 151336, 151338]

|

| 145 |

+

sampling_params = SamplingParams(temperature=0.95, max_tokens=1024, stop_token_ids=stop_token_ids)

|

| 146 |

+

|

| 147 |

+

inputs = tokenizer.apply_chat_template(prompt, tokenize=False, add_generation_prompt=True)

|

| 148 |

+

outputs = llm.generate(prompts=inputs, sampling_params=sampling_params)

|

| 149 |

+

|

| 150 |

+

print(outputs[0].outputs[0].text)

|

| 151 |

+

```

|

| 152 |

+

|

| 153 |

+

## License

|

| 154 |

+

|

| 155 |

+

Use of the GLM-4 model weights must comply with the [LICENSE](LICENSE).

|

| 156 |

+

|

| 157 |

+

## Citation

|

| 158 |

+

|

| 159 |

+

If you find our work helpful, please consider citing the following paper.

|

| 160 |

+

|

| 161 |

+

```

|

| 162 |

+

@misc{glm2024chatglm,

|

| 163 |

+

title={ChatGLM: A Family of Large Language Models from GLM-130B to GLM-4 All Tools},

|

| 164 |

+

author={Team GLM and Aohan Zeng and Bin Xu and Bowen Wang and Chenhui Zhang and Da Yin and Diego Rojas and Guanyu Feng and Hanlin Zhao and Hanyu Lai and Hao Yu and Hongning Wang and Jiadai Sun and Jiajie Zhang and Jiale Cheng and Jiayi Gui and Jie Tang and Jing Zhang and Juanzi Li and Lei Zhao and Lindong Wu and Lucen Zhong and Mingdao Liu and Minlie Huang and Peng Zhang and Qinkai Zheng and Rui Lu and Shuaiqi Duan and Shudan Zhang and Shulin Cao and Shuxun Yang and Weng Lam Tam and Wenyi Zhao and Xiao Liu and Xiao Xia and Xiaohan Zhang and Xiaotao Gu and Xin Lv and Xinghan Liu and Xinyi Liu and Xinyue Yang and Xixuan Song and Xunkai Zhang and Yifan An and Yifan Xu and Yilin Niu and Yuantao Yang and Yueyan Li and Yushi Bai and Yuxiao Dong and Zehan Qi and Zhaoyu Wang and Zhen Yang and Zhengxiao Du and Zhenyu Hou and Zihan Wang},

|

| 165 |

+

year={2024},

|

| 166 |

+

eprint={2406.12793},

|

| 167 |

+

archivePrefix={arXiv},

|

| 168 |

+

primaryClass={cs.CL}

|

| 169 |

+

}

|

| 170 |

+

```

|

README_en.md

ADDED

|

@@ -0,0 +1,167 @@


| 1 |

+

# GLM-4-9B-Chat

|

| 2 |

+

|

| 3 |

+

**2024/08/12, The repository code has been updated and now requires `transformers>=4.44.0`. Please update your dependencies accordingly.**

|

| 4 |

+

|

| 5 |

+

**On July 24, 2024, we released the latest technical interpretation related to long texts. Check

|

| 6 |

+

out [here](https://medium.com/@ChatGLM/glm-long-scaling-pre-trained-model-contexts-to-millions-caa3c48dea85) to view our

|

| 7 |

+

technical report on long context technology in the training of the open-source GLM-4-9B model.**

|

| 8 |

+

|

| 9 |

+

## Model Introduction

|

| 10 |

+

|

| 11 |

+

GLM-4-9B is the open-source version of the latest generation of pre-trained models in the GLM-4 series launched by Zhipu

|

| 12 |

+

AI. In the evaluation of data sets in semantics, mathematics, reasoning, code, and knowledge, **GLM-4-9B**

|

| 13 |

+

and its human preference-aligned version **GLM-4-9B-Chat** have shown superior performance beyond Llama-3-8B. In

|

| 14 |

+

addition to multi-round conversations, GLM-4-9B-Chat also has advanced features such as web browsing, code execution,

|

| 15 |

+

custom tool calls (Function Call), and long context

|

| 16 |

+

reasoning (supporting up to 128K context). This generation of models has added multi-language support, supporting 26

|

| 17 |

+

languages including Japanese, Korean, and German. We have also launched the **GLM-4-9B-Chat-1M** model that supports 1M

|

| 18 |

+

context length (about 2 million Chinese characters) and the multimodal model GLM-4V-9B based on GLM-4-9B.

|

| 19 |

+

**GLM-4V-9B** possesses dialogue capabilities in both Chinese and English at a high resolution of 1120*1120.

|

| 20 |

+

In various multimodal evaluations, including comprehensive abilities in Chinese and English, perception & reasoning,

|

| 21 |

+

text recognition, and chart understanding, GLM-4V-9B demonstrates superior performance compared to

|

| 22 |

+

GPT-4-turbo-2024-04-09, Gemini 1.0 Pro, Qwen-VL-Max, and Claude 3 Opus.

|

| 23 |

+

|

| 24 |

+

## Benchmark

|

| 25 |

+

|

| 26 |

+

We evaluated the GLM-4-9B-Chat model on some classic tasks and obtained the following results:

|

| 27 |

+

|

| 28 |

+

| Model | AlignBench-v2 | MT-Bench | IFEval | MMLU | C-Eval | GSM8K | MATH | HumanEval | NCB |

|

| 29 |

+

|:--------------------|:-------------:|:--------:|:------:|:----:|:------:|:-----:|:----:|:---------:|:----:|

|

| 30 |

+

| Llama-3-8B-Instruct | 5.12 | 8.00 | 68.58 | 68.4 | 51.3 | 79.6 | 30.0 | 62.2 | 24.7 |

|

| 31 |

+

| ChatGLM3-6B | 3.97 | 5.50 | 28.1 | 66.4 | 69.0 | 72.3 | 25.7 | 58.5 | 11.3 |

|

| 32 |

+

| GLM-4-9B-Chat | 6.61 | 8.35 | 69.0 | 72.4 | 75.6 | 79.6 | 50.6 | 71.8 | 32.2 |

|

| 33 |

+

|

| 34 |

+

### Long Context

|

| 35 |

+

|

| 36 |

+

The [eval_needle experiment](https://github.com/LargeWorldModel/LWM/blob/main/scripts/eval_needle.py) was conducted with

|

| 37 |

+

a context length of 1M, and the results are as follows:

|

| 38 |

+

|

| 39 |

+

|

| 40 |

+

|

| 41 |

+

The long-context capability was further evaluated on LongBench-Chat, and the results are as follows:

|

| 42 |

+

|

| 43 |

+

|

| 44 |

+

|

| 45 |

+

### Multi Language

|

| 46 |

+

|

| 47 |

+

The tests for GLM-4-9B-Chat and Llama-3-8B-Instruct are conducted on six multilingual datasets. The test results and the

|

| 48 |

+

corresponding languages selected for each dataset are shown in the table below:

|

| 49 |

+

|

| 50 |

+

| Dataset | Llama-3-8B-Instruct | GLM-4-9B-Chat | Languages |

|

| 51 |

+

|:------------|:-------------------:|:-------------:|:----------------------------------------------------------------------------------------------:|

|

| 52 |

+

| M-MMLU | 49.6 | 56.6 | all |

|

| 53 |

+

| FLORES | 25.0 | 28.8 | ru, es, de, fr, it, pt, pl, ja, nl, ar, tr, cs, vi, fa, hu, el, ro, sv, uk, fi, ko, da, bg, no |

|

| 54 |

+

| MGSM | 54.0 | 65.3 | zh, en, bn, de, es, fr, ja, ru, sw, te, th |

|

| 55 |

+

| XWinograd | 61.7 | 73.1 | zh, en, fr, jp, ru, pt |

|

| 56 |

+

| XStoryCloze | 84.7 | 90.7 | zh, en, ar, es, eu, hi, id, my, ru, sw, te |

|

| 57 |

+

| XCOPA | 73.3 | 80.1 | zh, et, ht, id, it, qu, sw, ta, th, tr, vi |

|

| 58 |

+

|

| 59 |

+

### Function Call

|

| 60 |

+

|

| 61 |

+

Tested

|

| 62 |

+

on [Berkeley Function Calling Leaderboard](https://github.com/ShishirPatil/gorilla/tree/main/berkeley-function-call-leaderboard).

|

| 63 |

+

|

| 64 |

+

| Model | Overall Acc. | AST Summary | Exec Summary | Relevance |

|

| 65 |

+

|:-----------------------|:------------:|:-----------:|:------------:|:---------:|

|

| 66 |

+

| Llama-3-8B-Instruct | 58.88 | 59.25 | 70.01 | 45.83 |

|

| 67 |

+

| gpt-4-turbo-2024-04-09 | 81.24 | 82.14 | 78.61 | 88.75 |

|

| 68 |

+

| ChatGLM3-6B | 57.88 | 62.18 | 69.78 | 5.42 |

|

| 69 |

+

| GLM-4-9B-Chat | 81.00 | 80.26 | 84.40 | 87.92 |

|

| 70 |

+

|

| 71 |

+

**This repository is the model repository of GLM-4-9B-Chat, supporting `128K` context length.**

|

| 72 |

+

|

| 73 |

+

## Quick Start

|

| 74 |

+

|

| 75 |

+

**For more inference code and requirements, please visit our [github page](https://github.com/THUDM/GLM-4).**

|

| 76 |

+

|

| 77 |

+

**Please install the exact [dependencies](https://github.com/THUDM/GLM-4/blob/main/basic_demo/requirements.txt) listed;

|

| 78 |

+

otherwise the model will not run properly.**

|

| 79 |

+

|

| 80 |

+

### Use the following method to quickly call the GLM-4-9B-Chat language model

|

| 81 |

+

|

| 82 |

+

Use the transformers backend for inference:

|

| 83 |

+

|

| 84 |

+

```python

|

| 85 |

+

import torch

|

| 86 |

+

from transformers import AutoModelForCausalLM, AutoTokenizer

|

| 87 |

+

|

| 88 |

+

device = "cuda"

|

| 89 |

+

|

| 90 |

+

tokenizer = AutoTokenizer.from_pretrained("THUDM/glm-4-9b-chat", trust_remote_code=True)

|

| 91 |

+

|

| 92 |

+

query = "Hello"

|

| 93 |

+

|

| 94 |

+

inputs = tokenizer.apply_chat_template([{"role": "user", "content": query}],

|

| 95 |

+

add_generation_prompt=True,

|

| 96 |

+

tokenize=True,

|

| 97 |

+

return_tensors="pt",

|

| 98 |

+

return_dict=True

|

| 99 |

+

)

|

| 100 |

+

|

| 101 |

+

inputs = inputs.to(device)

|

| 102 |

+

model = AutoModelForCausalLM.from_pretrained(

|

| 103 |

+

"THUDM/glm-4-9b-chat",

|

| 104 |

+

torch_dtype=torch.bfloat16,

|

| 105 |

+

low_cpu_mem_usage=True,

|

| 106 |

+

trust_remote_code=True

|

| 107 |

+

).to(device).eval()

|

| 108 |

+

|

| 109 |

+

gen_kwargs = {"max_length": 2500, "do_sample": True, "top_k": 1}

|

| 110 |

+

with torch.no_grad():

|

| 111 |

+

    outputs = model.generate(**inputs, **gen_kwargs)

|

| 112 |

+

    outputs = outputs[:, inputs['input_ids'].shape[1]:]

|

| 113 |

+

    print(tokenizer.decode(outputs[0], skip_special_tokens=True))

|

| 114 |

+

```

|
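The `gen_kwargs` in the snippet above combine `do_sample=True` with `top_k=1`. With only one candidate kept at each step, sampling should behave like greedy decoding (barring exact ties). The sketch below illustrates this with a hand-written top-k sampler in plain Python. It is not the `transformers` implementation, and the logits and the helper name `sample_top_k` are made up for illustration.

```python
import math
import random


def sample_top_k(logits, k, rng):
    """Sample one index from the k highest-scoring entries of `logits`."""
    # Indices of the k largest logits, highest first.
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    # Softmax weights over the kept candidates only.
    peak = max(logits[i] for i in top)
    weights = [math.exp(logits[i] - peak) for i in top]
    return rng.choices(top, weights=weights, k=1)[0]


rng = random.Random(0)
logits = [0.1, 2.5, -1.0, 2.4]  # hypothetical next-token scores

# With k=1 every draw returns the argmax (index 1), regardless of the seed.
draws = {sample_top_k(logits, k=1, rng=rng) for _ in range(100)}
print(draws)  # {1}
```

Raising `k` (or switching to `top_p` or `temperature`) would reintroduce randomness into the output.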

| 115 |

+

|

| 116 |

+

Use the vLLM backend for inference:

|

| 117 |

+

|

| 118 |

+

```python

|

| 119 |

+

from transformers import AutoTokenizer

|

| 120 |

+

from vllm import LLM, SamplingParams

|

| 121 |

+

|

| 122 |

+

# GLM-4-9B-Chat-1M

|

| 123 |

+

# max_model_len, tp_size = 1048576, 4

|

| 124 |

+

|

| 125 |

+

# GLM-4-9B-Chat

|

| 126 |

+

# If you encounter OOM, it is recommended to reduce max_model_len or increase tp_size

|

| 127 |

+

max_model_len, tp_size = 131072, 1

|

| 128 |

+

model_name = "THUDM/glm-4-9b-chat"

|

| 129 |

+

prompt = [{"role": "user", "content": "hello"}]

|

| 130 |

+

|

| 131 |

+

tokenizer = AutoTokenizer.from_pretrained(model_name, trust_remote_code=True)

|

| 132 |

+

llm = LLM(

|

| 133 |

+

model=model_name,

|

| 134 |

+

tensor_parallel_size=tp_size,

|

| 135 |

+

max_model_len=max_model_len,

|

| 136 |

+

trust_remote_code=True,

|

| 137 |

+

enforce_eager=True,

|

| 138 |

+

# GLM-4-9B-Chat-1M: if you encounter OOM, it is recommended to enable the following parameters

|

| 139 |

+

# enable_chunked_prefill=True,

|

| 140 |

+

# max_num_batched_tokens=8192

|

| 141 |

+

)

|

| 142 |

+

stop_token_ids = [151329, 151336, 151338]

|

| 143 |

+

sampling_params = SamplingParams(temperature=0.95, max_tokens=1024, stop_token_ids=stop_token_ids)

|

| 144 |

+

|

| 145 |

+

inputs = tokenizer.apply_chat_template(prompt, tokenize=False, add_generation_prompt=True)

|

| 146 |

+

outputs = llm.generate(prompts=inputs, sampling_params=sampling_params)

|

| 147 |

+

print(outputs[0].outputs[0].text)

|

| 148 |

+

```

|
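The `stop_token_ids` passed to `SamplingParams` above are the same three IDs listed as `eos_token_id` in this commit's `config.json`. As a rough sketch of what stopping on those IDs amounts to, the plain-Python helper below cuts a token sequence at the first stop ID. The helper name `truncate_at_stop` and the sample token IDs are hypothetical, and this is not how vLLM implements it internally.

```python
STOP_TOKEN_IDS = frozenset({151329, 151336, 151338})


def truncate_at_stop(token_ids, stop_ids=STOP_TOKEN_IDS):
    """Return the tokens that precede the first stop token, if any."""
    kept = []
    for token in token_ids:
        if token in stop_ids:
            break
        kept.append(token)
    return kept


# Hypothetical generation: three content tokens, a stop token, then leftovers.
generated = [101, 102, 103, 151336, 104]
print(truncate_at_stop(generated))  # [101, 102, 103]
```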

| 149 |

+

|

| 150 |

+

## LICENSE

|

| 151 |

+

|

| 152 |

+

The weights of the GLM-4 model are available under the terms of [LICENSE](LICENSE).

|

| 153 |

+

|

| 154 |

+

## Citations

|

| 155 |

+

|

| 156 |

+

If you find our work useful, please consider citing the following paper.

|

| 157 |

+

|

| 158 |

+

```

|

| 159 |

+

@misc{glm2024chatglm,

|

| 160 |

+

title={ChatGLM: A Family of Large Language Models from GLM-130B to GLM-4 All Tools},

|

| 161 |

+

author={Team GLM and Aohan Zeng and Bin Xu and Bowen Wang and Chenhui Zhang and Da Yin and Diego Rojas and Guanyu Feng and Hanlin Zhao and Hanyu Lai and Hao Yu and Hongning Wang and Jiadai Sun and Jiajie Zhang and Jiale Cheng and Jiayi Gui and Jie Tang and Jing Zhang and Juanzi Li and Lei Zhao and Lindong Wu and Lucen Zhong and Mingdao Liu and Minlie Huang and Peng Zhang and Qinkai Zheng and Rui Lu and Shuaiqi Duan and Shudan Zhang and Shulin Cao and Shuxun Yang and Weng Lam Tam and Wenyi Zhao and Xiao Liu and Xiao Xia and Xiaohan Zhang and Xiaotao Gu and Xin Lv and Xinghan Liu and Xinyi Liu and Xinyue Yang and Xixuan Song and Xunkai Zhang and Yifan An and Yifan Xu and Yilin Niu and Yuantao Yang and Yueyan Li and Yushi Bai and Yuxiao Dong and Zehan Qi and Zhaoyu Wang and Zhen Yang and Zhengxiao Du and Zhenyu Hou and Zihan Wang},

|

| 162 |

+

year={2024},

|

| 163 |

+

eprint={2406.12793},

|

| 164 |

+

archivePrefix={arXiv},

|

| 165 |

+

primaryClass={cs.CL}

|

| 166 |

+

}

|

| 167 |

+

```

|

config.json

ADDED

|

@@ -0,0 +1,45 @@


| 1 |

+

{

|

| 2 |

+

"_name_or_path": "THUDM/glm-4-9b-chat",

|

| 3 |

+

"model_type": "chatglm",

|

| 4 |

+

"architectures": [

|

| 5 |

+

"ChatGLMModel"

|

| 6 |

+

],

|

| 7 |

+

"auto_map": {

|

| 8 |

+

"AutoConfig": "configuration_chatglm.ChatGLMConfig",

|

| 9 |

+

"AutoModel": "modeling_chatglm.ChatGLMForConditionalGeneration",

|

| 10 |

+

"AutoModelForCausalLM": "modeling_chatglm.ChatGLMForConditionalGeneration",

|

| 11 |

+

"AutoModelForSeq2SeqLM": "modeling_chatglm.ChatGLMForConditionalGeneration",

|

| 12 |

+

"AutoModelForSequenceClassification": "modeling_chatglm.ChatGLMForSequenceClassification"

|

| 13 |

+

},

|

| 14 |

+

"add_bias_linear": false,

|

| 15 |

+

"add_qkv_bias": true,

|

| 16 |

+

"apply_query_key_layer_scaling": true,

|

| 17 |

+

"apply_residual_connection_post_layernorm": false,

|

| 18 |

+

"attention_dropout": 0.0,

|

| 19 |

+

"attention_softmax_in_fp32": true,

|

| 20 |

+

"attn_implementation": "sdpa",

|

| 21 |

+

"bias_dropout_fusion": true,

|

| 22 |

+

"ffn_hidden_size": 13696,

|

| 23 |

+

"fp32_residual_connection": false,

|

| 24 |

+

"hidden_dropout": 0.0,

|

| 25 |

+

"hidden_size": 4096,

|

| 26 |

+

"kv_channels": 128,

|

| 27 |

+

"layernorm_epsilon": 1.5625e-07,

|

| 28 |

+

"multi_query_attention": true,

|

| 29 |

+

"multi_query_group_num": 2,

|

| 30 |

+

"num_attention_heads": 32,

|

| 31 |

+

"num_hidden_layers": 40,

|

| 32 |

+

"num_layers": 40,

|

| 33 |

+

"rope_ratio": 500,

|

| 34 |

+

"original_rope": true,

|

| 35 |

+

"padded_vocab_size": 151552,

|

| 36 |

+

"post_layer_norm": true,

|

| 37 |

+

"rmsnorm": true,

|

| 38 |

+

"seq_length": 131072,

|

| 39 |

+

"use_cache": true,

|

| 40 |

+

"torch_dtype": "bfloat16",

|

| 41 |

+

"transformers_version": "4.44.0",

|

| 42 |

+

"tie_word_embeddings": false,

|

| 43 |

+

"eos_token_id": [151329, 151336, 151338],

|

| 44 |

+

"pad_token_id": 151329

|

| 45 |

+

}

|
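As a reading aid for the attention-related fields in config.json, the sketch below derives the width of a fused query/key/value projection and the KV-cache footprint per token. It assumes a grouped-query layout in which `multi_query_group_num` is the number of key/value heads and `kv_channels` is the per-head dimension. That interpretation and the helper names `qkv_projection_width` and `kv_cache_bytes_per_token` are assumptions made for illustration, not something this diff states.

```python
# Values copied from the config.json added in this commit.
config = {
    "hidden_size": 4096,
    "kv_channels": 128,
    "num_attention_heads": 32,
    "multi_query_attention": True,
    "multi_query_group_num": 2,
    "num_layers": 40,
    "seq_length": 131072,
    "torch_dtype": "bfloat16",
}

# Storage size of one element for a few common dtypes.
BYTES_PER_ELEMENT = {"bfloat16": 2, "float16": 2, "float32": 4}


def kv_head_count(cfg):
    """Number of key/value heads (assumed meaning of multi_query_group_num)."""
    if cfg["multi_query_attention"]:
        return cfg["multi_query_group_num"]
    return cfg["num_attention_heads"]


def qkv_projection_width(cfg):
    """Output width of a fused Q/K/V projection under the assumed layout."""
    head_dim = cfg["kv_channels"]
    q_width = cfg["num_attention_heads"] * head_dim
    return q_width + 2 * kv_head_count(cfg) * head_dim


def kv_cache_bytes_per_token(cfg):
    """Bytes needed to cache keys and values for one token across all layers."""
    element = BYTES_PER_ELEMENT[cfg["torch_dtype"]]
    return 2 * cfg["num_layers"] * kv_head_count(cfg) * cfg["kv_channels"] * element


if __name__ == "__main__":
    print(qkv_projection_width(config))       # 4608
    print(kv_cache_bytes_per_token(config))   # 40960
    full_context = kv_cache_bytes_per_token(config) * config["seq_length"]
    print(full_context / 2**30)               # 5.0 (GiB at seq_length tokens)
```

Under these assumptions, two key/value heads instead of 32 cut the cache per token by a factor of 16, which matters when `seq_length` is 131072.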

configuration.json

ADDED

@@ -0,0 +1 @@
+{"framework":"Pytorch","task":"nli"}

configuration_chatglm.py

ADDED

@@ -0,0 +1,58 @@
+from transformers import PretrainedConfig
+
+
+class ChatGLMConfig(PretrainedConfig):
+    model_type = "chatglm"
+
+    def __init__(
+        self,
+        num_layers=28,
+        padded_vocab_size=65024,
+        hidden_size=4096,
+        ffn_hidden_size=13696,
+        kv_channels=128,
+        num_attention_heads=32,
+        seq_length=2048,
+        hidden_dropout=0.0,
+        classifier_dropout=None,
+        attention_dropout=0.0,
+        layernorm_epsilon=1e-5,
+        rmsnorm=True,
+        apply_residual_connection_post_layernorm=False,
+        post_layer_norm=True,
+        add_bias_linear=False,
+        add_qkv_bias=False,
+        bias_dropout_fusion=True,
+        multi_query_attention=False,
+        multi_query_group_num=1,
+        rope_ratio=1,
+        apply_query_key_layer_scaling=True,
+        attention_softmax_in_fp32=True,
+        fp32_residual_connection=False,
+        **kwargs
+    ):
+        self.num_layers = num_layers
+        self.vocab_size = padded_vocab_size
+        self.padded_vocab_size = padded_vocab_size
+        self.hidden_size = hidden_size
+        self.ffn_hidden_size = ffn_hidden_size
+        self.kv_channels = kv_channels
+        self.num_attention_heads = num_attention_heads
+        self.seq_length = seq_length
+        self.hidden_dropout = hidden_dropout
+        self.classifier_dropout = classifier_dropout
+        self.attention_dropout = attention_dropout
+        self.layernorm_epsilon = layernorm_epsilon
+        self.rmsnorm = rmsnorm
+        self.apply_residual_connection_post_layernorm = apply_residual_connection_post_layernorm
+        self.post_layer_norm = post_layer_norm
+        self.add_bias_linear = add_bias_linear
+        self.add_qkv_bias = add_qkv_bias
+        self.bias_dropout_fusion = bias_dropout_fusion
+        self.multi_query_attention = multi_query_attention
+        self.multi_query_group_num = multi_query_group_num
+        self.rope_ratio = rope_ratio
+        self.apply_query_key_layer_scaling = apply_query_key_layer_scaling
+        self.attention_softmax_in_fp32 = attention_softmax_in_fp32
+        self.fp32_residual_connection = fp32_residual_connection
+        super().__init__(**kwargs)
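The constructor defaults in configuration_chatglm.py differ from the values in this commit's config.json in several places. The sketch below lists those differences with plain dictionaries, so it runs without `transformers` installed. It assumes the usual loading behavior, in which values from config.json are passed to the constructor as keyword arguments and so take precedence over the defaults. The helper name `overridden` is hypothetical.

```python
# Defaults copied from ChatGLMConfig.__init__ in this commit.
defaults = {
    "num_layers": 28, "padded_vocab_size": 65024, "hidden_size": 4096,
    "ffn_hidden_size": 13696, "kv_channels": 128, "num_attention_heads": 32,
    "seq_length": 2048, "hidden_dropout": 0.0, "classifier_dropout": None,
    "attention_dropout": 0.0, "layernorm_epsilon": 1e-5, "rmsnorm": True,
    "apply_residual_connection_post_layernorm": False, "post_layer_norm": True,
    "add_bias_linear": False, "add_qkv_bias": False, "bias_dropout_fusion": True,
    "multi_query_attention": False, "multi_query_group_num": 1, "rope_ratio": 1,
    "apply_query_key_layer_scaling": True, "attention_softmax_in_fp32": True,
    "fp32_residual_connection": False,
}

# Matching keys copied from config.json in this commit
# (classifier_dropout does not appear there).
shipped = {
    "num_layers": 40, "padded_vocab_size": 151552, "hidden_size": 4096,
    "ffn_hidden_size": 13696, "kv_channels": 128, "num_attention_heads": 32,
    "seq_length": 131072, "hidden_dropout": 0.0,
    "attention_dropout": 0.0, "layernorm_epsilon": 1.5625e-07, "rmsnorm": True,
    "apply_residual_connection_post_layernorm": False, "post_layer_norm": True,
    "add_bias_linear": False, "add_qkv_bias": True, "bias_dropout_fusion": True,
    "multi_query_attention": True, "multi_query_group_num": 2, "rope_ratio": 500,
    "apply_query_key_layer_scaling": True, "attention_softmax_in_fp32": True,
    "fp32_residual_connection": False,
}


def overridden(default_values, shipped_values):
    """Map each key whose shipped value differs to (default, shipped)."""
    return {
        key: (default_values[key], shipped_values[key])
        for key in default_values
        if key in shipped_values and shipped_values[key] != default_values[key]
    }


if __name__ == "__main__":
    for key, (old, new) in overridden(defaults, shipped).items():
        print(f"{key}: {old} -> {new}")
```

Keys that appear only in config.json, such as `original_rope` or `eos_token_id`, have no matching constructor parameter and would be passed through `**kwargs`.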

generation_config.json

ADDED

@@ -0,0 +1,13 @@
+{
+  "eos_token_id": [
+    151329,
+    151336,
+    151338
+  ],
+  "pad_token_id": 151329,
+  "do_sample": true,
+  "temperature": 0.8,
+  "max_length": 128000,
+  "top_p": 0.8,
+  "transformers_version": "4.44.0"
+}
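To make the sampling fields in generation_config.json concrete, here is a simplified sketch of temperature scaling followed by nucleus (top-p) filtering, using the `temperature` and `top_p` values of 0.8 from that file. It is a plain-Python illustration of the general technique, not the `transformers` implementation. The function names and the example logits are made up for the sketch.

```python
import math
import random


def nucleus_distribution(logits, temperature=0.8, top_p=0.8):
    """Return {token_id: probability} after temperature scaling and top-p filtering."""
    # Temperature below 1 sharpens the distribution; above 1 flattens it.
    scaled = [value / temperature for value in logits]
    # Numerically stable softmax.
    peak = max(scaled)
    exps = [math.exp(value - peak) for value in scaled]
    total = sum(exps)
    probs = [value / total for value in exps]
    # Keep the most likely tokens until their cumulative mass reaches top_p.
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for token_id in order:
        kept.append(token_id)
        cumulative += probs[token_id]
        if cumulative >= top_p:
            break
    # Renormalize over the surviving tokens.
    mass = sum(probs[i] for i in kept)
    return {i: probs[i] / mass for i in kept}


def sample(logits, rng, temperature=0.8, top_p=0.8):
    """Draw one token id from the filtered distribution."""
    dist = nucleus_distribution(logits, temperature, top_p)
    ids = list(dist)
    return rng.choices(ids, weights=[dist[i] for i in ids], k=1)[0]


if __name__ == "__main__":
    example_logits = [2.0, 1.0, 0.0, -1.0]  # made-up values for a 4-token vocabulary
    print(nucleus_distribution(example_logits))
    print(sample(example_logits, random.Random(0)))
```

With these example logits, only the two most likely tokens survive the filter, so the two low-probability tokens can never be drawn.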

model-00001-of-00010.safetensors

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:f4109636b2486ad54e150e68be254534971f1d0ce7464b27b56cf77521aa6da9
+size 1945161760

model-00002-of-00010.safetensors

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:584b0f3e097a8ef5fe01fba1c43b4c6410ae6d3b9b710be075f9501863222503
+size 1815217640

model-00003-of-00010.safetensors

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:06c6e7697ba1da1059f154a5cb8e1fe849210ba9fc6422ca7b3b2dfebba06d87
+size 1968291912

model-00004-of-00010.safetensors

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:2233bfa88b4179f9f2d7cd95db7cbe2cec574196c31cdb58162315ad151409fa
+size 1927406992

model-00005-of-00010.safetensors

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:ea209d70ada3b9a68d1ced8864bb95216cbf318630e9a9086cd570d734d66f71
+size 1815217672

model-00006-of-00010.safetensors

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:30015b88745e032e03b6a0a9c1f8d66321d382ded3fdda0cf58fc4137005a6cf
+size 1968291952

model-00007-of-00010.safetensors

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:f7f4b8149c8e47c9e0f7d5685f777511e51cf339bc9fdabf8e87b5754d048721
+size 1927406992

model-00008-of-00010.safetensors

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:db41135b5f9a620cdecca42655397655c71ca00663b2e5ac0858224bd46231eb
+size 1815217672

model-00009-of-00010.safetensors

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:862ddc7af9ab395e9fa0d4e5131a1023279a544c7a92ef3dc2a3fa32d375e83c
+size 1968291952

model-00010-of-00010.safetensors

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:5074b7b69fc7ccc69a0b3c651a2f826ea7a1695dd562d7a7ce825168d1550b43
+size 1649436712
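Each of the ten `.safetensors` entries above is a three-line Git LFS pointer, not the weights themselves. The sketch below parses one pointer and sums the ten `size` fields, then compares the sum with the `total_size` recorded in model.safetensors.index.json. The helper name `parse_lfs_pointer` is hypothetical, and reading the small difference as per-shard file headers is an assumption, not something the diff confirms.

```python
def parse_lfs_pointer(text):
    """Split a Git LFS pointer into its version, hash algorithm, digest, and size."""
    fields = dict(line.split(" ", 1) for line in text.strip().splitlines())
    algo, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "algo": algo,
        "digest": digest,
        "size": int(fields["size"]),
    }


# Pointer for model-00001-of-00010.safetensors, copied from this commit.
pointer_text = """version https://git-lfs.github.com/spec/v1
oid sha256:f4109636b2486ad54e150e68be254534971f1d0ce7464b27b56cf77521aa6da9
size 1945161760
"""

# "size" fields of shards 1 through 10, copied from this commit.
shard_sizes = [
    1945161760, 1815217640, 1968291912, 1927406992, 1815217672,
    1968291952, 1927406992, 1815217672, 1968291952, 1649436712,
]

# "total_size" from model.safetensors.index.json in this commit.
INDEX_TOTAL_SIZE = 18799902784

total_on_disk = sum(shard_sizes)
# Assumption: the remainder is non-tensor data such as each shard's header.
overhead = total_on_disk - INDEX_TOTAL_SIZE

if __name__ == "__main__":
    print(parse_lfs_pointer(pointer_text)["size"])  # 1945161760
    print(total_on_disk)                            # 18799941256
    print(overhead)                                 # 38472
```

To check a downloaded shard against its pointer, one could hash the file with `hashlib.sha256` and compare the hex digest with the `oid` value.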

model.safetensors.index.json

ADDED

@@ -0,0 +1,291 @@
+{
+  "metadata": {
+    "total_size": 18799902784
+  },
+  "weight_map": {
+    "transformer.embedding.word_embeddings.weight": "model-00001-of-00010.safetensors",
+    "transformer.encoder.final_layernorm.weight": "model-00010-of-00010.safetensors",
+    "transformer.encoder.layers.0.input_layernorm.weight": "model-00001-of-00010.safetensors",
+    "transformer.encoder.layers.0.mlp.dense_4h_to_h.weight": "model-00001-of-00010.safetensors",
+    "transformer.encoder.layers.0.mlp.dense_h_to_4h.weight": "model-00001-of-00010.safetensors",
+    "transformer.encoder.layers.0.post_attention_layernorm.weight": "model-00001-of-00010.safetensors",
+    "transformer.encoder.layers.0.self_attention.dense.weight": "model-00001-of-00010.safetensors",
+    "transformer.encoder.layers.0.self_attention.query_key_value.bias": "model-00001-of-00010.safetensors",
+    "transformer.encoder.layers.0.self_attention.query_key_value.weight": "model-00001-of-00010.safetensors",
+    "transformer.encoder.layers.1.input_layernorm.weight": "model-00001-of-00010.safetensors",
+    "transformer.encoder.layers.1.mlp.dense_4h_to_h.weight": "model-00002-of-00010.safetensors",
+    "transformer.encoder.layers.1.mlp.dense_h_to_4h.weight": "model-00001-of-00010.safetensors",
+    "transformer.encoder.layers.1.post_attention_layernorm.weight": "model-00001-of-00010.safetensors",
+    "transformer.encoder.layers.1.self_attention.dense.weight": "model-00001-of-00010.safetensors",
+    "transformer.encoder.layers.1.self_attention.query_key_value.bias": "model-00001-of-00010.safetensors",
+    "transformer.encoder.layers.1.self_attention.query_key_value.weight": "model-00001-of-00010.safetensors",
+    "transformer.encoder.layers.10.input_layernorm.weight": "model-00003-of-00010.safetensors",
+    "transformer.encoder.layers.10.mlp.dense_4h_to_h.weight": "model-00003-of-00010.safetensors",
+    "transformer.encoder.layers.10.mlp.dense_h_to_4h.weight": "model-00003-of-00010.safetensors",
+    "transformer.encoder.layers.10.post_attention_layernorm.weight": "model-00003-of-00010.safetensors",
+    "transformer.encoder.layers.10.self_attention.dense.weight": "model-00003-of-00010.safetensors",
+    "transformer.encoder.layers.10.self_attention.query_key_value.bias": "model-00003-of-00010.safetensors",
+    "transformer.encoder.layers.10.self_attention.query_key_value.weight": "model-00003-of-00010.safetensors",
+    "transformer.encoder.layers.11.input_layernorm.weight": "model-00003-of-00010.safetensors",
+    "transformer.encoder.layers.11.mlp.dense_4h_to_h.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.11.mlp.dense_h_to_4h.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.11.post_attention_layernorm.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.11.self_attention.dense.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.11.self_attention.query_key_value.bias": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.11.self_attention.query_key_value.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.12.input_layernorm.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.12.mlp.dense_4h_to_h.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.12.mlp.dense_h_to_4h.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.12.post_attention_layernorm.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.12.self_attention.dense.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.12.self_attention.query_key_value.bias": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.12.self_attention.query_key_value.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.13.input_layernorm.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.13.mlp.dense_4h_to_h.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.13.mlp.dense_h_to_4h.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.13.post_attention_layernorm.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.13.self_attention.dense.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.13.self_attention.query_key_value.bias": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.13.self_attention.query_key_value.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.14.input_layernorm.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.14.mlp.dense_4h_to_h.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.14.mlp.dense_h_to_4h.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.14.post_attention_layernorm.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.14.self_attention.dense.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.14.self_attention.query_key_value.bias": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.14.self_attention.query_key_value.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.15.input_layernorm.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.15.mlp.dense_4h_to_h.weight": "model-00005-of-00010.safetensors",
+    "transformer.encoder.layers.15.mlp.dense_h_to_4h.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.15.post_attention_layernorm.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.15.self_attention.dense.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.15.self_attention.query_key_value.bias": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.15.self_attention.query_key_value.weight": "model-00004-of-00010.safetensors",
+    "transformer.encoder.layers.16.input_layernorm.weight": "model-00005-of-00010.safetensors",
+    "transformer.encoder.layers.16.mlp.dense_4h_to_h.weight": "model-00005-of-00010.safetensors",
+    "transformer.encoder.layers.16.mlp.dense_h_to_4h.weight": "model-00005-of-00010.safetensors",
+    "transformer.encoder.layers.16.post_attention_layernorm.weight": "model-00005-of-00010.safetensors",
+    "transformer.encoder.layers.16.self_attention.dense.weight": "model-00005-of-00010.safetensors",
+    "transformer.encoder.layers.16.self_attention.query_key_value.bias": "model-00005-of-00010.safetensors",
+    "transformer.encoder.layers.16.self_attention.query_key_value.weight": "model-00005-of-00010.safetensors",
+    "transformer.encoder.layers.17.input_layernorm.weight": "model-00005-of-00010.safetensors",

|

| 72 |

+